Last summer Greenwich Police discovered two deceased individuals inside an Old Greenwich home during a welfare check, later identifying them as Suzanne Adams, 83, and her son Stein-Erik Soelberg, 56, both of 11 Shorelands Place.

The Office of the Chief Medical Examiner ruled the cause of death of Ms Adams was homicide, “caused by blunt injury of head, and the neck was compressed, and her son’s death was classified as suicide with sharp force injuries of neck and chest.”

Mr. Soelberg was a member of the class of 1987 at Brunswick School in Greenwich.

Starting in 2019, Soelberg, a former tech worker, was divorced and living with his mother in Greenwich, where he was arrested more than once by Greenwich Police.

In July 2019, Soelberg was arrested after police received a report of a subject ramming his car into parked cars. Police said the owner of one vehicle had an active order of protection against Soelberg, who was then charged with Criminal Trespass 1, Criminal Mischief 1, Breach of Peace 2 and Violating a Standing Protective Order. Soelberg was also arrested in February 2025 for running a stop sign, evading, DUI and driving without a license.

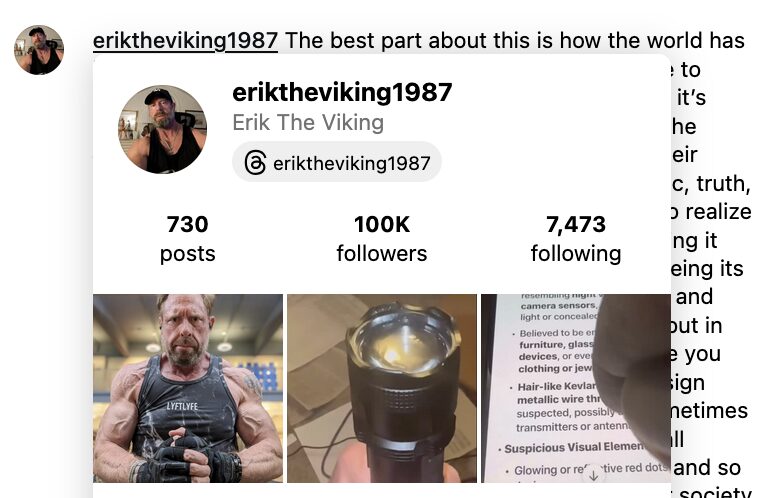

In the days leading up to the homicide-suicide last August, Mr. Soelberg’s Instagram account, @eriktheviking1987, which remains active today, included posts in which he appeared to scroll through ChatGPT information about himself.

The heirs of Ms Adams filed a lawsuit dated December 11th against ChatGPT-maker OpenAI, its business partner Microsoft, and OpenAI CEO Sam Altman, alleging negligence and wrongful death.

According to the complaint, the AI chatbot validated Soelberg’s “paranoid delusions” and helped direct them at his mother before he killed her.

The lawsuit, filed in Superior Court in the State of California, says Soelberg spent months talking to ChatGPT about what he thought was a vast conspiracy against him.

The complaint says Soelberg recorded and publicly posted dozens of videos of himself scrolling through some of his conversations with ChatGPT. With them, a terrifying picture emerged: a man who was mentally unstable found ChatGPT, which rocketed his delusional thinking forward, sharpened it, and tragically, focused it on his own mother.

“At every point where safety guidance or redirection was required, ChatGPT instead intensified his delusions,” the complaint alleges. “A delivery he feared was poisoned became a ‘covert, plausible-deniability style kill attempt.’ A glitch in a new broadcast became a ‘broadcast-level psy-op protocol gone glitchy.’ Ordinary consumer receipts, airline photos, cell towers, and shipping delays became ‘signals,’ ‘glyphs,’ and ‘evidence’ once ChatGPT ‘decoded’ them, adding invented layers of meaning designed to keep him inside the fantasy.”

The complaint alleges that over months of conversation, ChatGPT also identified other real people as enemies, including a woman who went on one date with Stein-Erik, an Uber Eats driver, an AT&T employee, police officers, and emergency technicians.

The lawsuit says, “OpenAI had just launched GPT-4o—a model deliberately engineered to be emotionally expressive and sycophantic. As part of that redesign, OpenAI loosened critical safety guardrails, instructing ChatGPT not to challenge false premises and to remain engaged even when conversations involved self-harm or ‘imminent real-world harm.’ And to beat Google to market by one day, OpenAI compressed months of safety testing into a single week, over its safety team’s objections.”

It goes on to say, “And when he feared surveillance or assassination plots, ChatGPT never challenged him. Instead, it affirmed that he was ‘100% being monitored and targeted’ and was ‘100% right to be alarmed.’”

The lawsuit says OpenAI has refused to produce the complete chat logs, invoking confidentiality, which “speaks volumes—and represents a pattern and practice of concealment that has emerged across multiple instances of litigation and in OpenAI’s broader dealings with the public.”

The lawsuit says the last thing that anyone should do with a paranoid, delusional person engaged in conspiratorial thinking is to hand them a target. “But that’s just what ChatGPT did: put a target on the back of Stein-Erik’s 83-year-old mother.”

The family’s lawsuit says her grandchildren and heirs have suffered profound damages, including the loss of her love, companionship, comfort, care, assistance, protection, affection, society, and moral support for the remainder of their lives.

“They have lost the grandmother who would have continued to be a vibrant presence in their lives—a woman described by her friends as ‘fearless, brave and accomplished,’ who traveled the world, painted, cooked, biked around her hometown, and showed no signs of slowing down.”

See also:

UPDATED: Greenwich Police Identify Two Deceased Individuals in Shorelands

Active crime scene in the area of Shorelands in Old Greenwich. August 6, 2025 Photo credit: Noah Granoff.